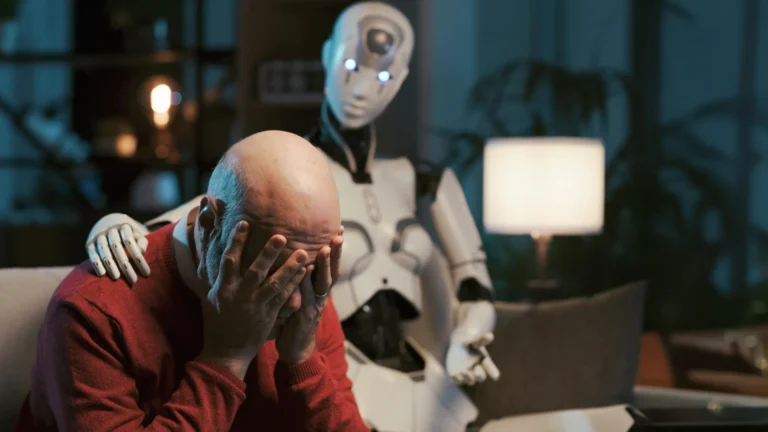

London – The use of artificial intelligence in mental health care has reached a contentious turning point, following escalating warnings about the risks of relying on “chatbots” as psychological assistants. As of May 2026, the gap is widening between tech companies that promote these apps as quick support tools and psychiatrists who view them as “time bombs” that could threaten patient safety, especially when AI-generated responses are neither professionally supervised nor regulated.

“Diagnosis Without Feeling”: Why Specialists Fear Replacing Doctors with Machines

Mental health experts say the greatest danger lies in acute cases such as severe depression or suicidal thoughts, where software, no matter how advanced, fails to grasp the subtle emotional complexities that a human doctor evaluates. In such cases, any error in the “therapeutic advice” provided by a chatbot could have catastrophic results. Developers argue that they provide “primary support” for people with limited access to clinics, but experience in the field suggests that users over-rely on these platforms, treating them as a final alternative to specialized treatment.

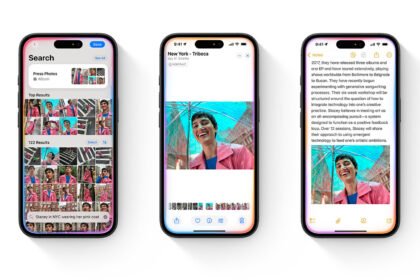

“Tech Ethics”: An International Race to Regulate “Digital Therapy”

Observers believe this escalating controversy has brought “AI Ethics” back to the forefront, with growing demands for strict international standards to ensure transparency and legal accountability for diagnostic errors. Specialists emphasize that technical development must not outpace medical safeguards. They maintain that AI can be an effective “assistant tool” under professional guidance, but that it cannot replace the human expertise and emotional touch necessary for psychological recovery.